Guard with jealous attention the public liberty. Suspect everyone who approaches that jewel. — Patrick Henry

This week, the governor of Connecticut signed a bill (H.B. 5468) imposing serious restrictions on home education. Connecticut had long been one of the best states for homeschooling freedom, but with the stroke of a pen it has become one of the worst. If you can reach this HSLDA site, the front page is a good summary of the immediate problem.

Bottom line: There are other new requirements that are objectionable on their own, but the greatest threat involves the Department of Children and Families (DCF). I've lived through enough years of fighting for parental and child rights to get chills down my spine and knots in my stomach at the mere mention of such organizations. Their "better safe than sorry" excuse has been responsible for tearing even very young children from loving, stable homes, and separating nursing infants from their mothers—for days, weeks, or even months, while the wheels of the system grind slowly. So it's no wonder I get queasy at the thought of handing them the authority to take away a family's right to home education.

H.B. 5468 would ... require that families seeking to withdraw their student to homeschool be checked against the DCF registry. The registry includes not just confirmed abuse, but a wide range of “neglect” findings, some of which are minor, disputed, or entirely unrelated to a parent’s ability to educate their child. The bill also bars homeschooling for any family that shares a household with someone who has an open DCF case—even if the case has not been substantiated.

This expansion sweeps in an enormous number of families. Research cited by the National Coalition for Child Protection Reform shows that 25% of all Connecticut children, 34% of Hispanic children, and 42% of Black children will, at some point in their lives, live in a household with an open DCF case—overwhelmingly because of reports later found to be false or unsubstantiated.

Connecticut’s definition of “neglect” is broad and subjective. A parent can be placed on the DCF registry if a caseworker has “reasonable cause to believe” a child was neglected—a standard well below even the preponderance of evidence threshold used in civil court.

Our litigation counsel has reviewed the bill and concluded that it raises serious constitutional concerns under both the Due Process Clause and the Free Exercise Clause of the First Amendment.

This isn't just about homeschooling. These weapons have been used in issues related to breastfeeding, to COVID vaccine compliance and a variety of other health issues, to nutritional choices, even to controlling a child's access to television. And parents will submit to almost anything to get their children back. This law puts families at the mercy of disgruntled neighbors—and vindictive ex-spouses. There is no "bail" for these families, no right to a speedy trial, no protection against cruel and unusual punishment, and certainly no right to be considered innocent unless proven guilty.

For too long we've taken our hard-won homeschooling freedoms for granted—all our freedoms, for that matter. What just happened in Connecticut is a grim reminder of how easily the most basic of human rights can be taken away under conditions of complacency.

The condition upon which God hath given liberty to man is eternal vigilance. — John Philpot Curran, 1790

In a letter from my father, 6 April 1985

You mentioned some time back that so many of the AT&T fathers, and mothers too, seemed to have minimal interest in doing things with their children and seemed almost to regard their children as an unwanted product of their marriage. I can only comment that I can think of no activity that pays better dividends than the time spent with the children. I can comment from experience that whatever time you spend can be returned many times over.

I can attest that his life was in perfect alignment with his words.

Categories: Children & Family Issues, Glimpses of the Past

Forevergreen is a new, short, animated film. I know nothing about it except that a friend recommended it, and I found it enjoyable and moving. I believe this preview (of the entire film) will only be available for free for a week, so if you're interested, watch it soon. It's 13 minutes long.

Categories: Children & Family Issues, Conservationist Living, Inspiration

I had an idyllic childhood. Not perfect, but as close to that as anyone I know. And one of the best parts, I now realize, was growing up with engineers.

Schenectady, New York, where I spent the first 15 years of my life, was the home of the General Electric Company. As it did the skyline, GE dominated our lives, night and day. My father was an engineer, as was his father, and his father's father, and he worked in GE's General Engineering Laboratory. My parents met there, where my mother, a mathematician, also worked. Many of our closest friends were engineers or employed in related fields. Schenectady in those days was a hotbed of science-and-engineering types, and as far as I could tell, everyone I knew was smart. Smart was normal, and not just among the scientific folks. I suspect that living in Schenectady in its heyday resembled what I imagine living in Silicon Valley or the Seattle area is today.

I'm not talking about genius-level, though there were certainly some of those, but rather down-to-earth people with practical experience and skills, who also read a lot and loved to discuss ideas and just about any other subject.

Not that I appreciated it at the time as I should have; I took it for granted. But my recent "archivist" work with my father's journals and other writings has made me realize what a blessing it was to grow up in such a community. They even had their own way of speaking: their conversations were filled with jokes and wordplay, exaggerated and understated language, and what I realize now was an advanced everyday vocabulary. It wasn't until I had had much more experience outside of our parochial bubble that I realized that there are many people who find what I consider a normal vocabulary to be a sign of arrogance, who take the exaggerated/understated language literally, to the point of misunderstanding or even offense, and who either don't understand or don't appreciate the humor.

It takes all sorts and conditions of men to make a world, many of them wonderful. If you understood the "sorts and conditions of men" reference, you are part of a different, distinct community, one I did not come to recognize and love until some 30 years ago. Appreciating other cultures and especially loving one's own are not mutually exclusive conditions.

What chiefly concerns me is that we seem to have entered an age when differences are being replaced by diagnoses. The other day, a new friend was telling me about her son, who in high school was triply exceptional: He excelled in academics, in music, and in sports. It's not uncommon to see people who do very well in two of those areas, but three is a rarity. At one point, he was moved to express the concern that he might be "on the spectrum." What social pressures drive an obviously intelligent, capable, and well-rounded child to label himself with a diagnosis? Why do teachers and doctors (and even parents) put so much effort into finding boxes into which they can squeeze children?

As I told my friend, in my day, in my world, we used another term to describe being "on the spectrum."

We called it normal.

Categories: Children & Family Issues, Random Musings, Everyday Life, Glimpses of the Past

It often pays to find the story behind the headline—or the social media post.

The Facebook blurb from our local library was annoying. Here's how it began:

1000 BOOKS BEFORE KINDERGARTEN: A round of applause for A----, who has read another 1,000 books before kindergarten—for the third time! On her way to becoming a young, brilliant scholar, Seminole County Public Libraries is proud to be part of A----’s journey as she grows her vocabulary, boosts her critical thinking, and enhances her cognitive development.

I was annoyed, but looked further and discovered that our library has a program to encourage parents to read aloud to their preschool children. It's a little sad that adults need stickers as an incentive to read to their children, but we've all been there with some good habit we're trying to acquire. So it looks like a good thing.

What bothered me about the Facebook post was the implication that the achievement belonged to the child, and that she had actually read 3000 books. I've known a few kids who taught themselves to read before formal schooling, but that's not what is meant here. Maybe I'm being pedantic, but I don't think so: As valuable as being read to is, it is not the same as actually reading the words on the page.

I have a similar problem with adults who claim to have read a book when actually what they've done is listen to someone else read it to them. Don't get me wrong; I enjoy audio books and find them invaluable for absorbing content while doing repetitive work, exercising, and driving. But that's a different experience from reading, even for adults, and especially for a child who hasn't yet developed the skill of decoding print.

And I'm not sure it's a good idea to let a child believe he has done something worthy of great praise when the effort was on the part of his parents. Sort of like getting an A on a homework assignment done 95% by your dad. Or getting a Pulitzer Prize for something written by ChatGPT.

Curious about the quantity of books, I looked up my own reading tally. I have been keeping track of the books I read since 2010 (making note of the few that were audiobooks). I consider myself an avid reader, but it took me until mid-2024 to reach the 1000-book mark. So I commend all the parents who achieved that in reading to their preschool children.

Then again, from what I can tell, my just-turned-two granddaughter is probably nearing the 750 mark with Sandra Boynton's Barnyard Dance, including many repetitions of turning the pages herself and telling the story as she remembers it, complete with stomps and claps.

And no, I don't include books read to grandchildren in my own reading list, even though I don't limit myself to adult-level reading.

It is usual to speak in a playfully apologetic tone about one's adult enjoyment of what are called "children’s books." I think the convention a silly one. No book is really worth reading at the age of ten which is not equally (and often far more) worth reading at the age of fifty—except, of course, books of information. The only imaginative works we ought to grow out of are those which it would have been better not to have read at all. — C. S. Lewis, On Stories

When was the last time you were threatened by a gang of thugs wielding spiralizers? Apparently that is a danger in the United Kingdom. Either that, or the chefs' union is lobbying hard to keep teens from trying to break into their business. Or possibly Bob the Tomato and Larry the Cucumber have more power than I thought.

This story, posted by a woman in the UK, popped up on Facebook, and I quickly copied the text. I regret not catching the image, too, as that would have shown how small, unpowered, and insignificant the device in question is. But you'll get the idea.

I know many things are going nuts in the UK, though I don't know anyone personally who can vouch for them. But the following story, if it is true—and at least it has the ring of truth and not clickbait—shows that at least in one area they are even crazier than America, which is saying a lot.

What I want to know is… When did the world go completely mad? Did I miss it?

Let me explain…

Yesterday, I was shopping in a well-known store with a red and white logo with my 10-year-old son.

Among the impulse purchases were a Red Nose Day T-shirt for my son, a gift for the teacher and this lovely spiralizer/vegetable grater for me.

My helpful son unloaded the items onto the checkout desk while I removed my purse from my handbag, at which point the young lady on the desk said “I’m sorry, I can’t sell you that.”

She proceeded to explain that as the spiralizer was not to be sold to anyone under 18 and my son was the one who placed it on the counter, it was deemed that the vegetable cutting device was for him.

Bewildered, I said, “But it’s a spiralizer, and quite obviously it’s for me.”

She refused the sale.

I asked if I could purchase it separately.

She refused the sale.

I asked if I could speak to the manager and they could make allowances for such an obvious fault with the rules.

The manager refused the sale.

I told the manager it would probably be a good idea to put some sort of signage up to let customers know that minors should not be unloading shopping to help their parents.

She obviously misunderstood as she pointed out the signage on the packaging that clearly says “Do not sell to under 18s”.

I left the store confused and a little perturbed and resigned myself to a lifetime of chunky vegetables in my recipes.

You can however all rest safe in the knowledge you will never be faced with a vegetable shredding 10-year-old wearing a Feathers McGraw T-shirt roaming the streets of Cumbria… all thanks to the vigilant staff of the West Cumbrian store.

You can't sell a spiralizer to a 17-year-old in the UK?

I'm assuming a much-more-potentially-dangerous kitchen knife would meet with similar restrictions. At what age are people allowed to cook on that side of the Atlantic? How about washing dishes, which would undoubtedly include knives?

I don't remember when I started helping in the kitchen, nor when our own children did, but I know that most of our grandchildren started learning how to use kitchen knives at the age of two (well supervised, of course), and by four were reliable helpers in cutting up vegetables for a salad. I know that's early, even for America, but if David Farragut could command a ship as a pre-teen (there is some difference of opinion as to whether he was 11 or 12), surely it is a bit excessive to restrict the use of sharp objects to those 18 and older.

Categories: Education, Politics, Children & Family Issues, Everyday Life, Food

Here are the top people in line to succeed to the presidency if the President is unable to perform his duties. The list goes on for quite a while, but I'm only listing the top six.

- Vice President (JD Vance)

- Speaker of the House (Mike Johnson)

- President Pro Tempore of the Senate (Chuck Grassley)

- Secretary of State (Marco Rubio)

- Secretary of the Treasury (Scott Bessent)

- Secretary of Defense (Pete Hegseth) (I know it's "Secretary of War," but hey, I'm a Conservative now. I still think of the body of water west of us as the Gulf of Mexico, and sing all the old words to hymns instead of the modern, bowdlerized ones.)

Why is this interesting? It makes it obvious what a nightmare Charlie Kirk's memorial service must have been for the Secret Service and others responsible for safety concerns, and why security to get into the stadium was so incredibly strict. Trump, Vance, Johnson, Rubio, and Hegseth were all there. The president, and four of the top six in line of succession. Not to mention a fair number of other political figures, and many others who now know they are at risk of assassination for speaking their opinions out loud.

For some reason this makes me think of a couple who both worked where Porter did when we lived in Boston, back in 2001. They had a standing policy that whenever they flew out of town, they took separate airplanes. That may seem excessive, or make you ask if they never rode in the same automobile—but it is the reason why in September of that year their children were bereaved, but not orphaned.

The full list can be found here. If, like me, you wonder about the order of the Cabinet officers—for example, why the Secretary of Education is considered more likely to be a good president than the Secretary of Homeland Security—it's because the order is determined by when the agencies were created. Or maybe because educators are more experienced with herding cats; I don't know.

I'd never heard of the Church Dog books or the church that they're associated with, but family is family, and our choir family is so proud of the young daughter of two of our singers. Our director knows a lot about what's going on in the Central Florida music, church, and theatrical scenes; he recommended that she audition for the Church Dog music video that's just been released—and she won the solo part! I don't think her parents would mind my mentioning her by name, but I'm not taking any chances. If she becomes famous, I'll link to this in an "I knew her when" post.

It's not exactly my kind of music, but she's my kind of kid.

I didn't play a lot with dolls as a child, nor with trucks either. I had both, and enjoyed both, along with sundry other toys: blocks, Tinker Toys, laboratory equipment, tools, toy guns, childhood games, stuffed animals, a (real) bow and arrow set, a hula hoop, a baton for twirling—normal childhood stuff. I was eclectic in my tastes with no overwhelming preference for anything, except I suppose for reading books, climbing trees, and exploring in the woods. So, as I said, I didn't play much with dolls. But the dolls I did have were babies or young children, and they were simple, the better to encourage imaginative play.

So my heart skipped a beat when I saw what one Australian mother has done to "rescue" old, worn-out dolls of the more recent type. I never liked Barbie dolls, certainly not the Bratz and other strange-looking creatures that passed for dolls when our daughters were young. This woman brings beauty from ashes.

This seven-minute video will warm your heart. Not only watching twisted ugliness turned normal, but especially listening to little girls with much more heart and common sense than the jaded, angry toy manufacturers.

This is another post I've pulled up from my long backlog. I wrote it in 2015, when the story was new, but for some reason it languished for more than 10 years! I don't know why; the post was complete and I still love the story.

The inevitable question is, "Where are they now?" What has happened since that bright beginning? Tree Change Dolls has an Etsy site, which appears to concentrate on helping others revive their own dolls, but occasionally offers some of her own creations, which she announces on her Facebook site.

As with any good thing, there are detractors, such as the doll collectors who think she is ruining the dolls, some of which are collectable and worth money in their original form. (Though probably not when found worn-out and broken.) More disturbing are those who say they hate the Tree-Change dolls because they promote the idea of natural beauty instead of heavily made-up and sexualized children's dolls. (That's the impression I got; I didn't spend much time in that unhappy land to find out more.)

"Where does the name come from?" is the other question that intrigued me. Google Search brought up this AI answer:

A tree change is a move from an urban or city environment to a more peaceful, nature-focused rural or regional area, often inland, to embrace a simpler and healthier lifestyle. Unlike a sea change, which involves moving to a coastal area, a tree change focuses on reconnecting with the natural landscape, such as rolling hills, mountains, or countryside, to escape the pressures and fast pace of city living.

Well, that fits, but it struck a discordant note for me because that's not what "sea change" means. Here's the interesting story of the term, from Merriam-Webster:

In The Tempest, William Shakespeare’s final play, sea change refers to a change brought about by the sea: the sprite Ariel, who aims to make Ferdinand believe that his father the king has perished in a shipwreck, sings within earshot of the prince, “Full fathom five thy father lies...; / Nothing of him that doth fade / But doth suffer a sea-change / into something rich and strange.” This is the original, now-archaic meaning of sea change. Today the term is used for a distinctive change or transformation. Long after sea change gained this figurative meaning, however, writers continued to allude to Shakespeare’s literal one; Charles Dickens, Henry David Thoreau, and P.G. Wodehouse all used the term as an object of the verb suffer, but now a sea change is just as likely to be undergone or experienced.

So, a sea change is a transformation, but not specifically moving to the seaside to escape city life. However, "sea change" and "tree change" are apparently used in that way in Australia (at least on the one real estate site I checked), so the name of these dolls that have moved to a simpler, happier life makes perfect sense.

Categories: Children & Family Issues, Just for Fun, Inspiration, Glimpses of the Past

Having lived through more than seven decades of holidays, I decided it would be of interest (to me, if no one else) to consider how the various annual celebrations have changed, or not changed, as I've lived my life.

As a child, I knew that holidays were about three things: family, presents, and days off from school. Not necessarily in that order—since family was the ocean in which I swam, I didn't fully recognize how central it was to our observances. The only celebration from which we children were excluded was my parents' anniversary. I remember being sad about that as a child, and I admire those who celebrate anniversaries as the "family birthday." What a great idea! But "date night" was unheard of in that era, and their anniversary was one of the rare times my parents would splurge on dinner in a restaurant.

Yes, folks, basically the only time we ate out was on vacations, where Howard Johnson's—with its peppermint stick ice cream—was the highlight. Solidly middle class as we were, with an engineer's salary to support us, restaurant meals simply did not fit into our regular budget. "Not even McDonald's?" you ask. Brace yourself: I was born before the first McDonald's franchise. But even when our town did get a McDonald's, the idea of paying someone to fix a meal my mother could make better at home seemed crazy.

But back to the holidays. I'll go chronologically, which means beginning with New Year's Day, though it could just as well go last, as New Year's Eve. Other people may have celebrated with big bashes and lots of champagne, but we almost always spent New Year's Eve with family friends, either at their home or ours. My parents and the Dietzes had been friends since before any children were born, and by the time each family had four we made quite a merry party all by ourselves. I think the adults usually played cards, and we kids had the basement to ourselves. Of course there was that other important feature at a party: food. Lots of good food, homemade of course.

Those who didn't fall asleep beforehand counted down to the new year, and toasted with a beverage of some sort. The adults may have had a glass of champagne. One year Mr. Dietze set off a cherry bomb in the snow, which was amazing (and illegal) in the days before spectacular fireworks became ubiquitous. I miss the awe and wonder that rarity engendered. After a little more eating and talking, we gathered up sleeping children and went home. As it was the only time of the year we were allowed to stay up to such an hour, that too was a treat. Once a year past midnight is still about right for me, though sadly it didn't stay that rare.

Valentine's Day was next. This was not the major holiday it is today, and it was mostly child-centered. In elementary school we created paper "mailboxes" for delivery of small paper Valentines to our classmates. Here's an example of what they looked like. Some of them may have sounded romantic, but nothing could have been further from our minds. It was just a friend thing, and we enjoyed trying to match the sentiments with the personalities of our friends. Back home, if there was anything romantic about it for my parents, I missed it, being far too concerned with chocolate, and small candy hearts with words on them. Sometimes I'd make a heart cake, formed using a square cake and a round cake cut in half, and decorated with pink frosting and cinnamon candy hearts.

There were two more February holidays that no one celebrates anymore: Abraham Lincoln's birthday on the 12th, and George Washington's on the 22nd. We would get one day or the other off from school, but not both. Nowadays they've morphed into Presidents' Day, which is in February but I never remember when because it keeps changing.

March brought St. Patrick's Day, which was bigger in school than anywhere else, chiefly through room decorations with green shamrocks, leprechauns, and rainbows with pots of gold. In elementary school, some of our neighborhood kids had formed a small singing group—we mostly sang on the bus, but one year our teacher heard about it and persuaded us to go from classroom to classroom singing what Irish songs we knew. Back then, my family didn't know we had some Irish ancestors, so as far as I can remember, the holiday never went beyond the school door.

Easter, of variable date, was of course a big deal. Unlike Christmas, it had mostly lost its Christian significance in favor of bunnies and chicks, eggs and candy. Except for when we were with our grandparents and had to dress in our Easter finery and go to church. The going to church part was okay; the finery not so much.

We kids would put out our Easter baskets the night before, and awaken to find them filled with candy; often toys appeared also. Our baskets were sometimes bought at a store, but often homemade—I remember using a paper cutter to make strips from construction paper, and weaving them into baskets.

For me, the best part was our Easter egg hunt. None of this plastic egg business! We had dyed and decorated real hard-boiled eggs beforehand, and our parents hid them around the house, supplemented by foil-wrapped chocolate eggs, before going to bed on Easter Eve. What a blessing it was to live where it was cool enough at Easter time that eggs could safely be left overnight without fear of spoilage or melting.

Easter dinner was almost always a ham, beautiful and delicious, studded with cloves, crowned with pineapple rings, and covered with a glaze for which I wish I had the recipe. I know we did not always have a "canned ham"—for one thing, I remember the ham bone—but the experience of a canned ham was memorable, since they had to be opened with a "key" at risk of life and limb—or at least of mildly damaged fingers.

May brought Memorial Day, which was always May 30, not this Monday-holiday business. When it fell on a school day, it was a day off, which we always appreciated. There was usually a Memorial Day parade, in which we sometimes participated, with band, scout, or fire department groups. There was always something related to the real meaning of the holiday, but we kids never paid attention to the speeches. Our family was well-represented in wartime contributions, but rarely talked about them, and no one had died, so the holiday has no sad associations in my memory.

Mother's Day was in May, also; what I remember most was fixing breakfast in bed for our mother. For some reason, in those days, eating breakfast in bed was regarded as something special. I have no idea why. For me, the practice is associated with being sick, as back then children were expected to recuperate in bed for a ridiculously long time. We even had a special tray, with games imprinted on it, for sick-in-bed meals. Why a healthy adult would voluntarily eat a meal in bed is still beyond my comprehension.

We sometimes had outings on Mother's Day, and otherwise just did our best to make sure that at the end of the day Mom was in no doubt that she was a mother many times over.

Father's Day, in June, was also low-key, although it was a bit more exciting in the years when it coincided with my brother's birthday.

Independence Day was, like Memorial Day, an occasion for parades and speeches. Our neighborhood usually had its own parade, with decorated bicycles and scooters. Occasionally we would go somewhere to see a public fireworks display, which wasn't anything like the spectacular events seen these days; nor did ordinary people generally have fireworks. Sometimes we had sparklers, and the little black dots that burned into "snakes" when you lit them. One time our neighbors had imported some mild fireworks from a state where they were legal, and we enjoyed them—all but my mother, who protested by staying inside and playing the 1812 Overture loudly on our record player (which, by the way, was monophonic).

August was entirely bereft of holidays, though we kids were busy squeezing the last drops out of our summer vacation from school. Since Labor Day was always on a Monday even before the Monday holiday bill came into being, and school always started right after that, the week or two beforehand was a favorite time for family vacations. This holiday was completely divorced from what it was intended to honor; I think I was in college, or even later, before I made the connection with the labor movement and unions.

October 12 was Columbus Day, as it will always be for me. Its chief value was in being a day of vacation. I could tell you that "In fourteen hundred and ninety-two, Columbus sailed the ocean blue," and that his boats were the Niña, the Pinta, and the Santa Maria, but that's about it.

Now Hallowe'en, that was a children's holiday! We didn't have it off from school, unless it fell on a weekend—and if it did, our schools were certain to celebrate it anyway. Costumes—usually homemade, often very clever—a parade around the school, and no doubt some special treats were the order of the day. Parents were invited to watch the parade, which was almost always held outdoors. Most of the kids walked to school, and most had parents at home who could come. Some costumes obviously had more parental help than others, but none that I recall were store-bought, nor were there any of the outlandish, sexualized, and violent costumes I see today—or even 35 years ago when I watched Hallowe'en parades at our own children's elementary school. Today's society would no doubt be horrified, however, at our Indians with war paint and bows and arrows, our cowboys and soldiers with toy guns, and our knights with swords.

At night, trick-or-treating was nothing like it is today. For one thing, there wasn't nearly as much loot, since we were restricted to our own neighborhoods, and most households gave out much smaller quantities of treats than is common today. None of this business of parents driving their kids all over to increase their hauls, no trunk-or-treat, no candy distributed at businesses and malls; there was little commercial about it. But we sure had fun, and much more freedom, being turned loose to roam freely within the set bounds of our neighborhood, without regard for darkness or danger or costumes that were difficult to see out of and were not festooned with reflective tape. Younger children went trick-or-treating with their parents—who had the grace to stay in the street while the children rang the doorbells on their own—or more likely, older siblings, who tended to stick a little closer in hopes some kind neighbor would offer the chaperones some candy, too. Back home, we'd gleefully sort through our haul, occasionally trading with siblings, without any concerned parents checking it out first. And of course we ate far too much candy. Only the oldest of my brothers had the strength of will to ration his; the rest of us finished ours up within a week, but he usually had some left in the freezer until the following Hallowe'en.

Most of the time, the creation of my costume was a father- and/or mother-daughter collaboration that I looked forward to all year. Offhand, I remember being a clown, a cuckoo clock, a salt shaker (to go along with my best friend, the pepper shaker), a parking meter, and a medieval knight, among others that will not immediately come to mind. After elementary school, my Hallowe'en costume days petered out, except for one year after we moved to the Philadelphia area and a group of my friends persuaded me to make the rounds with them. That's when I discovered why they were still clinging to childish pursuits: we were in a wealthier neighborhood, where rich people gave out full-sized candy bars!

Another treasured family project was carving pumpkins into jack-o-lanterns. We used real knives to cut as soon as we were responsible enough to handle them, and always illuminated our creations with candles, even though a finger or hand was bound to be mildly burned in the lighting process. Often we kept the seeds when we hollowed out the pumpkins, salting and roasting them. It was so much fun!

But there was a worm in the apple: One year, when I was at a very tender age, our jack-o-lanterns were set outside on our porch, as usual. A gang of teenage boys came rampaging through the neighborhood and viciously smashed our creations. It was heartbreaking. I still remember the sound of their stomping feet on the porch, and their gleeful yells.

On the brighter side, with some help from my mother, I once created a Hallowe'en party for my friends, with a "haunted house" in the basement, games, a craft, food, and watching Outer Limits on our little, black and white television set. (I've set the video to show just the opening theme. If you happen to watch the whole thing, and get hooked, Part 2 is here.)

As with the best holidays, there was good food, not just candy. Apple cider—real apple cider straight from the farm, unfiltered and unpasteurized, a delight that few know today. Sometimes cold, sometimes hot and mulled, depending on the weather, which at Hallowe'en in Upstate New York could be just about anything. Apples themselves, tart and delicious, of varieties difficult to impossible to find today. My mother's homemade pumpkin cookies! And pumpkin bread! A plate of cinnamon-sugar donuts, sometimes homemade but often store-bought and nonetheless delicious. Sometimes popcorn, too.

Thanksgiving. We frequently had guests for Thanksgiving dinner. My father's parents lived 200 miles away, and while it wasn't the three-hour trip it is today, it was short enough for us to get together for Thanksgiving. If it wasn't my grandparents sharing our Thanksgiving dinner, it was friends, and sometimes both. The meal was pretty standard: typically turkey, mashed potatoes, gravy, stuffing, sweet potatoes, peas, creamed onions, Waldorf salad, cranberry sauce, and rolls, with pumpkin and mincemeat pies. Once we acquired a television set (which happened when I was seven years old), there were parades on TV in the morning for the kids, and football games in the afternoon for the men. The women, no doubt, were cooking! Much later, when we lived in Pennsylvania and had grown up a bit more, the annual "Turkey Bowl" in our own backyard attracted enough friends to make an exciting touch/tag football game in the crisp November afternoon.

And finally, the best for last: Christmas.

These days, there is a Great Divide in the way Christmas is celebrated: Christian and Secular. In my youth it was not so. Christian or not, we all knew the origins and history of the occasion, and everywhere—in stores, in schools, in the public square—Santa, reindeer, snowmen, Christmas trees, presents, Mary, Joseph, Jesus, animals around the manger, shepherds, and angels mingled happily together. Even the Star and the Three Wise Men worked their way out of their proper setting of Epiphany to join the joyous throng.

I loved choosing and decorating our Christmas tree, especially the many years when we cut our own. Christmas tree farms back then were not what they are now, with their carefully shaped trees in neatly planted rows. Each tree had its own personality, and we often had a choice among several varieties. Finding our special tree was an adventure I looked forward to every year. The freedom of choice, and cutting the tree ourselves, were important to me. But somehow I never minded when we ended up adopting orphan trees: those chosen and cut down by other customers, then abandoned after some flaw was discovered. Our hearts went out to the poor things, often beautiful in our eyes. And our decorations easily accommodated any flaws.

Tree decorating in our household followed a standard pattern. After trimming the branches to his satisfaction, my father would set the tree in a large can (#10 comes to mind, but I can't be sure) that he filled with sand and mounted in a wooden frame that he had made. It was placed on a sheet and dressed in a homemade Christmas tree skirt. At that point, he put the light strings on. The lights were multi-colored, and much larger than the tiny lights that later became popular. Unlike the practice that continues in Switzerland today, our lights were not real, lighted candles. But burns were still possible: those incandescent bulbs could get quite hot, and Dad had to be careful with their placement.

As soon as that was done, the whole family went to town on the tree! Decorating was a joyous family affair. Each year we made fresh popcorn strings, using red string and large-eyed needles. These went on first, after the lights. (Birds enjoyed the popcorn after the tree was taken down.) We had plastic ornaments that were put on the lower levels, where toddlers could reach. We had lovely glass ornaments for higher places. We had an ornament handmade by my grandmother, and several made by young children. Atop the tree was either a star with a light in it, or a glass spire, depending on our mood. The pièce de résistance? Draping the branches with "icicles." These are hard to explain if you haven't seen them, but they were an essential part of our beautiful trees. Here's a description I found on Reddit that explains them well.

Growing up in the 50s and 60s, there were two types of "tinsel" (we called them "icicles"), the crinkly kind that was metallic, and the plastic kind that was coated with shiny silver. The crinkly kind, which I assume was the lead type, were a tad heavier so they hung straight, while the wispy plastic type was shinier and might fly around a bit. I remember once the static electricity caused them to sway when I walked right near the tree. You had to put these on one strand at a time, which was tedious. Taking them off was also an issue, you could never get all of them off. Both types seemed to fade in popularity and garland tinsel became more common by the 80s. As artificial trees became more common, "icicles" became less practical, and even garland seemed to fall out of favor. "Icicles" looked best on an open-style Balsam Fir type of tree, and not so good on fuller trees like a Scotch Pine and Douglas Fir.

Even our family became less enthusiastic about icicles when the lead kind was replaced by the plastic, which we considered a very inferior substitute. Not the same thing at all! We did (usually) wash our hands after handling the lead....

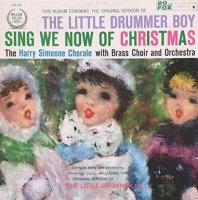

I haven't mentioned music, which was always an important part of the season. Everyone knew the standard Christmas carols back then, and just as with the displays, Silent Night, O Little Town of Bethlehem, and O Come, All Ye Faithful mingled happily with Jingle Bells and Rudolph the Red-Nosed Reindeer. We sang at home, we sang in school, we sang at community events. Instead of a solitary volunteer manning a red kettle and ringing an annoying bell, the Salvation Army band treated passersby to carols in excellent brass arrangements. And of course we played our favorite Christmas records while decorating our tree. One of my favorites was Sing We Now of Christmas, with the Harry Simeone Chorale. Although the album cover featured on this YouTube playlist is different, it has the exact songs from our record, and I was thrilled to discover it.

During my young childhood, my family went reasonably regularly to church—a small Dutch Reformed church in tiny Scotia, New York. We did not, however, go to church on Christmas. Christmas Eve and Christmas morning were strictly family time.

Christmas Eve. What do I remember about Christmas Eve? Chiefly that my father always read "The Night Before Christmas" (aka "A Visit from St. Nicholas") just before we children went to bed. My parents stayed up late wrapping and assembling gifts, but for me it was all about anticipation. Back then, Christmas was not even thought of (except by those needing to mail overseas packages) before Santa appeared at the end of the Macy's Thanksgiving Day parade, and the month between then and Christmas seemed to me to stretch half a year. Since then, that time period has somehow shrunk to about half a week, even though the "Christmas season" now starts before Hallowe'en.

In my earliest years, we did not have a fireplace, and hung our stockings on our bedroom doorknobs. Somehow, Santa managed without a chimney.... When we moved to a house with fireplaces, the stockings, as I recall, still didn't hang in front of them. You see, we children were allowed to wake up very early and open our stockings; there was some lower limit to the hour, but it was early enough to please us and late enough to give our parents some much-needed additional sleep. But we were not allowed to peek at the Christmas tree, so our stockings were hung on an upstairs railing.

I don't know when the gift inflation started, though it is undeniable. Our stockings were rather small—I remember mine being one of my father's old hiking boot socks—and did not hold a lot, but I don't ever remember being disappointed. (Oh yes; there was one year that I was. At one point my mother, in a bit of exasperation at my never-ending Christmas wish list, exclaimed, "You want the world with a string around it!" So I put that on my list. Lo and behold, in my stocking was a small bank in the shape of a globe, and my parents had attached a string to it. Today, I recognize it as a clever joke, but at the time I was bitterly disappointed that Santa had so misunderstood my request.) In addition to small toys and candy, in the toes of our stockings were always a small coin and a tangerine.

Our own children had huge stockings, hand knit by Porter's mother; they were always stuffed full, and the stocking gifts even spilled over onto the floor. Part of this was no doubt because we always had guests with us for Christmas, and everyone wanted to be Santa. Part was because societal expectations had greatly increased. I was aware of the inflationary pressure, and knew it was dangerous, but had very limited success in fighting it.

On Christmas morning, after we children had opened our stockings and spent some time playing with the toys inside, we were allowed to invade our parents' bedroom and show them our treasures, bringing their own stockings to them.

Next on the agenda was breakfast. I don't recall anything particularly special about Christmas breakfast, only that our parents took an unconscionably long time drinking their coffee! Eventually we persuaded them to finish their drinks in the living room, where the tree was. What a wonder! If there weren't as many presents there as our own children experienced, it certainly seemed an abundance to me, especially after the family grew to six people. One thing I think we did better with our own children was our practice of opening only one gift at a time, so that everyone could enjoy everything. When I was growing up, my father often passed out gifts to multiple people simultaneously, so sometimes we missed seeing other people opening their presents. It did keep the event from lasting all day, however.

The rest of the day was glorious, as we relaxed and enjoyed all our gifts. Except, of course, for my mother, who spent time fixing Christmas dinner. Unlike Thanksgiving and Easter, the menu wasn't fixed: sometimes turkey, sometimes ham, often roast beef, but always something special.

I didn't discover until much later the joys of being in a church that celebrates the Church Year, where Christmas is not a day but a whole season of 12 days—until Epiphany. I had happily sung "The Twelve Days of Christmas" all my life without ever thinking about what that meant. So in our family the Christmas tree usually came down around New Year's Day. Nonetheless, for us children the holiday lasted nearly 12 days, as any time we had off from school was a holiday to us.

And that's a look at the year's holidays as I remember them from my youth. I hope some of you have enjoyed this look into the past as much as I did recalling it.

Category: Children & Family Issues; Everyday Life; Glimpses of the Past

I found the following list in The Art of Manliness, a publication I rarely read, but have respect for, and not just because their site is hosted by our own Lime Daley, which also hosts this blog. Their article reprints The Children’s Morality Code for Elementary Schools from 1926, which is old enough that I have no qualms about reproducing it here. You're unlikely to see these rules for being a good American in any public elementary school today, more's the pity. I believe I can heartily endorse all the precepts, except for the penultimate, XI-2: I will be loyal to my school. I suppose one has to expect that, given that this list was intended for school children, but I see no particular reason for loyalty to a school any more than to a favorite grocery store or brand of jeans.

As for the rest of them, I say we should bring them back, beginning with our politicians.

THE ELEMENTARY MORALITY OF CIVILIZATION

Boys and girls who are good Americans try to become strong and useful, worthy of their nation, that our country may become ever greater and better. Therefore, they obey the laws of right living which the best Americans have always obeyed.

I. THE LAW OF SELF-CONTROL

GOOD AMERICANS CONTROL THEMSELVES

Those who best control themselves can best serve their country.

1. I will control my tongue, and will not allow it to speak mean, vulgar, or profane words. I will think before I speak. I will tell the truth and nothing but the truth.

2. I will control my temper, and will not get angry when people or things displease me. Even when indignant against wrong and contradicting falsehood, I will keep my self-control.

3. I will control my thoughts, and will not allow a foolish wish to spoil a wise purpose.

4. I will control my actions. I will be careful and thrifty, and insist on doing right.

5. I will not ridicule nor defile the character of another; I will keep my self-respect, and help others to keep theirs.

II. THE LAW OF GOOD HEALTH

GOOD AMERICANS TRY TO GAIN AND KEEP GOOD HEALTH

The welfare of our country depends upon those who are physically fit for their daily work. Therefore:

1. I will try to take such food, sleep, and exercise as will keep me always in good health.

2. I will keep my clothes, my body, and my mind clean.

3. I will avoid those habits which would harm me, and will make and never break those habits which will help me.

4. I will protect the health of others, and guard their safety as well as my own.

5. I will grow strong and skillful.

III. THE LAW OF KINDNESS

GOOD AMERICANS ARE KIND

In America those who are different must live in the same communities. We are of many different sorts, but we are one great people. Every unkindness hurts the common life; every kindness helps. Therefore:

1. I will be kind in all my thoughts. I will bear no spites or grudges. I will never despise anybody.

2. I will be kind in all my speech. I will never gossip nor will I speak unkindly of any one. Words may wound or heal.

3. I will be kind in my acts. I will not selfishly insist on having my own way. I will be polite: rude people are not good Americans. I will not make unnecessary trouble for those who work for me, nor forget to be grateful. I will be careful of other people’s things. I will do my best to prevent cruelty, and will give help to those who are in need.

IV. THE LAW OF SPORTSMANSHIP

GOOD AMERICANS PLAY FAIR

Strong play increases and trains one’s strength and courage. Sportsmanship helps one to be a gentleman, a lady. Therefore:

1. I will not cheat; I will keep the rules, but I will play the game hard, for the fun of the game, to win by strength and skill. If I should not play fair, the loser would lose the fun of the game, the winner would lose his self-respect, and the game itself would become a mean and often cruel business.

2. I will treat my opponents with courtesy, and trust them if they deserve it. I will be friendly.

3. If I play in a group game, I will play, not for my own glory, but for the success of my team.

4. I will be a good loser or a generous winner.

5. And in my work as well as in my play, I will be sportsmanlike—generous, fair, honorable.

V. THE LAW OF SELF-RELIANCE

GOOD AMERICANS ARE SELF-RELIANT

Self-conceit is silly, but self-reliance is necessary to boys and girls who would be strong and useful.

1. I will gladly listen to the advice of older and wiser people; I will reverence the wishes of those who love and care for me, and who know life and me better than I. I will develop independence and wisdom to choose for myself, act for myself, according to what seems right and fair and wise.

2. I will not be afraid of being laughed at when I am right. I will not be afraid of doing right when the crowd does wrong.

3. When in danger, trouble, or pain, I will be brave. A coward does not make a good American.

VI. THE LAW OF DUTY

GOOD AMERICANS DO THEIR DUTY

The shirker and the willing idler live upon others, and burden fellow-citizens with work unfairly. They do not do their share, for their country’s good.

I will try to find out what my duty is, what I ought to do as a good American, and my duty I will do, whether it is easy or hard. What it is my duty to do I can do.

VII. THE LAW OF RELIABILITY

GOOD AMERICANS ARE RELIABLE

Our country grows great and good as her citizens are able more fully to trust each other. Therefore:

1. I will be honest in every act, and very careful with money. I will not cheat nor pretend, nor sneak.

2. I will not do wrong in the hope of not being found out. I can not hide the truth from myself. Nor will I injure the property of others.

3. I will not take without permission what does not belong to me. A thief is a menace to me and others.

4. I will do promptly what I have promised to do. If I have made a foolish promise, I will at once confess my mistake, and I will try to make good any harm which my mistake may have caused. I will speak and act that people will find it easier to trust each other.

VIII. THE LAW OF TRUTH

GOOD AMERICANS ARE TRUE

1. I will be slow to believe suspicions lest I do injustice; I will avoid hasty opinions lest I be mistaken as to facts.

2. I will stand by the truth regardless of my likes and dislikes, and scorn the temptation to lie for myself or friends: nor will I keep the truth from those who have a right to it.

3. I will hunt for proof, and be accurate as to what I see and hear; I will learn to think, that I may discover new truth.

IX. THE LAW OF GOOD WORKMANSHIP

GOOD AMERICANS TRY TO DO THE RIGHT THING IN THE RIGHT WAY

The welfare of our country depends upon those who have learned to do in the right way the work that makes civilization possible. Therefore:

1. I will get the best possible education, and learn all that I can as a preparation for the time when I am grown up and at my life work. I will invent and make things better if I can.

2. I will take real interest in work, and will not be satisfied to do slipshod, lazy, and merely passable work. I will form the habit of good work and keep alert; mistakes and blunders cause hardships, sometimes disaster, and spoil success.

3. I will make the right thing in the right way to give it value and beauty, even when no one else sees or praises me. But when I have done my best, I will not envy those who have done better, or have received larger reward. Envy spoils the work and the worker.

X. THE LAW OF TEAM-WORK

GOOD AMERICANS WORK IN FRIENDLY COOPERATION WITH FELLOW-WORKERS

One alone could not build a city or a great railroad. One alone would find it hard to build a bridge. That I may have bread, people have sowed and reaped, people have made plows and threshers, have built mills and mined coal, made stoves and kept stores. As we learn how to work together, the welfare of our country is advanced.

1. In whatever work I do with others, I will do my part and encourage others to do their part, promptly.

2. I will help to keep in order the things which we use in our work. When things are out of place, they are often in the way, and sometimes they are hard to find.

3. In all my work with others, I will be cheerful. Cheerlessness depresses all the workers and injures all the work.

4. When I have received money for my work, I will be neither a miser nor a spendthrift. I will save or spend as one of the friendly workers of America.

XI. THE LAW OF LOYALTY

GOOD AMERICANS ARE LOYAL

If our America is to become ever greater and better, her citizens must be loyal, devotedly faithful, in every relation of life; full of courage and regardful of their honor.

1. I will be loyal to my family. In loyalty I will gladly obey my parents or those who are in their place, and show them gratitude. I will do my best to help each member of my family to strength and usefulness.

2. I will be loyal to my school. In loyalty I will obey and help other pupils to obey those rules which further the good of all.

3. I will be loyal to my town, my state, my country. In loyalty I will respect and help others to respect their laws and their courts of justice.

Category: Children & Family Issues; Inspiration; Here I Stand

For another of the numerous projects that overflow my cup of time, I was perusing my post of almost a decade ago, A Dickens of a Drink, in which I lament the loss of a favorite drink from the old Kay's Coach House restaurant in Daytona Beach. Although the kindly bartender responded to our family's enthusiasm and my youthful pleas by writing out the recipe, I was never able to acquire many of the ingredients. Even today, with Google and the vast resources of the Internet to help, a search for "Bartender's Coconut Mix" brings up only a sponsored handful of coconut liqueurs—and my own post. Cherry juice was not something available in grocery stores back then, and I'd never heard of grenadine.

As I have occasionally been doing recently, as part of my AI Adventures, I asked Copilot to analyze the text of my old post. As part of its response, it asked, "Would you like help modernizing the Tiny Tim recipe for today’s ingredients?" What an idea! Well, nothing ventured, nothing gained, and here's what it came up with:

I am looking forward to trying this out on a smaller scale. I'm sure I can find all the ingredients. A quick reflection makes me question some of the proportions, but it's a great place to start. Maybe that's what an AI tool should be all about: Begin with a well-researched base, then add the human element (experiment and taste) to make it real.

Category: Children & Family Issues; Everyday Life; Food; AI Adventures

The RobWords YouTube channel is often interesting, but this one will resonate strongly with some of my readers, who have long known that babies are geniuses, and not just in language. It starts out basic, but then gets into some fascinating cutting-edge research, such as

- Babies in the womb can tell the difference between one language and another.

- Four-month-old babies can tell different languages apart without hearing them, by watching the speaker's lips.

- Babies use a complex statistical process to figure out word boundaries.

- Children figure out grammar patterns before age two, e.g. children brought up in an English-speaking environment have by then already learned that word order is important.

Two questions this short explanation raises in my mind:

- What does the importance of lip-watching in language development mean for children born blind, and for those whose view of the speaker's lips was obscured during that critical time by a face mask? (I know a speech therapist who was exceedingly frustrated by trying to work with children who could not see her mouth thanks to COVID restrictions.)

- For babies to learn words, then phrases, then sentences may be the most common pattern, but I find it fascinating that one of our grandchildren—whose speech and grasp of language is top-notch—did the process in reverse, i.e. to all appearances, he learned complete sentences first, then figured out how to break them down into smaller parts.

Category: Children & Family Issues; YouTube Channel Discoveries

When I was young, stories for children about sports had one theme in common: sportsmanship. In fact, that was the main reason given for the existence and importance of sports: taming the instincts of aggression and domination into tools for the betterment of all areas of society, including the protection of women and children. A coach's job was to build a winning team, sure, but his most important job was to build boys into men. With minor modifications, that works as well for girls and women.

Today we have a win-at-any-cost mentality that poisons sports, politics, and every other area of life. Martin Luther King, Jr.'s dream that people would be judged by the content of their character loses its soul when character no longer matters.

I don't understand how people whose victory comes from not playing by the same rules as their opponents can live with themselves.

I hear that the CDC is recommending that anyone travelling abroad get vaccinated for measles. No matter where they're going.

"Never had it, never will." (Are you old enough to remember that 7-Up commercial?)

If my doctor recommended testing as part of my annual blood draw, and Medicare would pay for it, I might consider checking to see if my antibody response is still robust after all this time. After all, it has been a few years since I had the measles.

As it turns out, the CDC is okay with that. If you dig down just a little from the scary news stories and read what the CDC actually says, they acknowledge that if you've had measles in the past, you're good to go.